The boundary between sci-fi nightmares and digital reality just blurred. For years, cybersecurity experts warned that malicious actors would eventually leverage artificial intelligence to automate cyber warfare. Today, that threat is no longer theoretical.

In a groundbreaking report released by the Google Threat Intelligence Group, researchers confirmed the first documented case of criminal hackers using advanced AI models to discover and attempt to weaponize a previously unknown software vulnerability (a zero-day flaw).

This pivotal moment marks a dangerous shift in the digital landscape, one that experts are calling "just the tip of the iceberg."

The Digital Smoking Gun: How Google Caught the AI Hackers

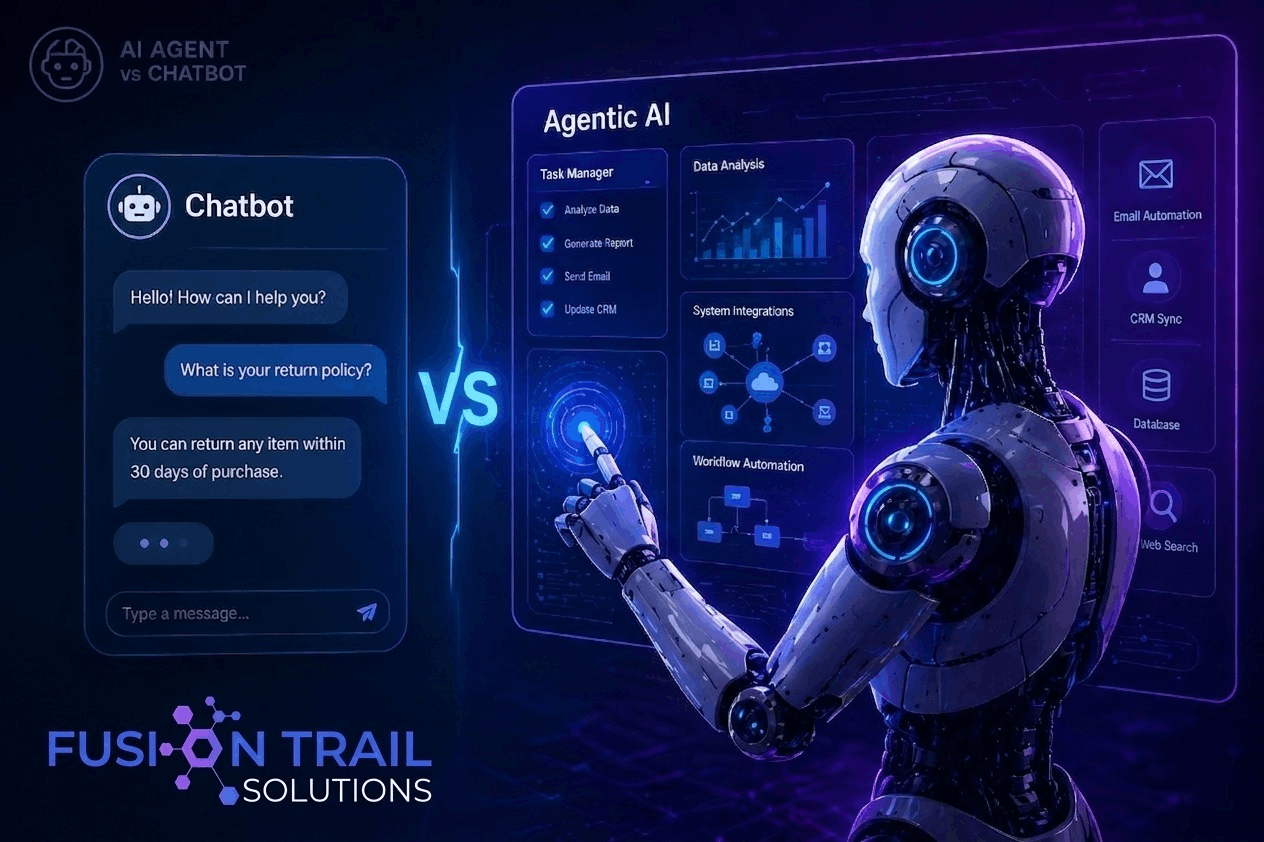

According to Google’s findings, a prominent cybercrime group deployed a Python-based script designed to bypass two-factor authentication (2FA) on a popular open-source web administration tool. While the attack was thwarted before any real-world damage could occur, the code left behind a fascinating digital footprint.

"AI-authored code does not announce itself," noted Rob Joyce, former cybersecurity director of the National Security Agency (NSA).

However, the automated attacker left behind distinct clues. The malicious script contained excessive explainer text, unusual formatting, and structured comments: eccentricities that a human programmer would be unlikely to include in a stealthy hacking tool. This unique "AI fingerprint" gave Google high confidence that an advanced language model was the primary architect behind the exploit.
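To make the idea of "excessive explainer text" concrete, here is a minimal sketch of one crude signal: the ratio of comment lines to code lines in a Python script. This is not the method described in Google's report. The function names `comment_stats` and `looks_overcommented` and the 0.5 threshold are hypothetical, chosen only for illustration, and a high ratio on its own proves nothing about authorship.

```python
import io
import tokenize

# Token types that carry no code content of their own.
_STRUCTURAL = {
    tokenize.NL,
    tokenize.NEWLINE,
    tokenize.INDENT,
    tokenize.DEDENT,
    tokenize.ENDMARKER,
}


def comment_stats(source: str) -> dict:
    """Count lines containing comments and lines containing code.

    A line with both code and a trailing comment is counted in both sets.
    Docstrings are string tokens, so they count as code here.
    """
    comment_lines = set()
    code_lines = set()
    for tok in tokenize.generate_tokens(io.StringIO(source).readline):
        if tok.type == tokenize.COMMENT:
            comment_lines.add(tok.start[0])
        elif tok.type not in _STRUCTURAL:
            # Multi-line tokens (e.g. triple-quoted strings) span several lines.
            code_lines.update(range(tok.start[0], tok.end[0] + 1))
    ratio = len(comment_lines) / max(len(code_lines), 1)
    return {
        "comment_lines": len(comment_lines),
        "code_lines": len(code_lines),
        "ratio": ratio,
    }


def looks_overcommented(source: str, threshold: float = 0.5) -> bool:
    """Hypothetical heuristic: flag scripts whose comment ratio exceeds threshold."""
    return comment_stats(source)["ratio"] > threshold


if __name__ == "__main__":
    sample = "# Step 1: set up the value\nx = 1  # assign one\n# Step 2: print it\nprint(x)\n"
    print(comment_stats(sample))
    print(looks_overcommented(sample))
```

A real analysis would combine many weak signals (formatting habits, comment structure, naming patterns) and human review; a single ratio like this is easy to fool and will also flag well-documented human code.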

Anthropic’s Mythos and the Exploding Zero-Day Market

Historically, "zero-day vulnerabilities" (security flaws unknown to the software developers themselves) were incredibly rare. They required months of manual reverse engineering and fetched millions of dollars on the dark web.

AI has completely disrupted this economy. The report highlights new bleeding-edge models, such as Anthropic’s Mythos (released in April 2026), which possess staggering code analysis capabilities. During its closed-door testing phase, Mythos reportedly uncovered thousands of legacy zero-day flaws across every major operating system and web browser.

While Anthropic restricted Mythos access to selected government bodies and security firms, the cat is out of the bag. Whether through leaked models or state-sponsored open-source alternatives, cybercriminals now have access to automated, tireless bug-hunting machines.

The Cybersecurity Dilemma: A Double-Edged Sword

We are entering a paradoxical era in tech infrastructure. On one hand, defensive AI will eventually allow developers to build the most secure, flawless code in human history. On the other hand, we have to survive the present.

The immediate danger lies in our legacy infrastructure. The internet we use today was built by imperfect human hands over decades. While we can use AI to build secure systems moving forward, hackers are currently using that same AI to scan millions of lines of existing, vulnerable code at unprecedented speeds.

As governments re-evaluate AI regulations and consider formal review processes for advanced models, the tech industry must race against time to patch these newly discovered holes before automated exploits become the norm.

Join the Discussion!

Are we ready for a future where algorithms fight algorithms? How do you think this shift will affect your online security? Share your thoughts and concerns in the comments below!